Amid growing constraints on terrestrial infrastructure, NVIDIA hints at orbital computing as a long-term direction for scaling AI.

At NVIDIA GTC 2026, CEO Jensen Huang moved the conversation beyond GPUs and raw performance. He outlined a broader vision: the future of artificial intelligence will be defined not only by how we compute, but by how computing infrastructure is architected, distributed, and scaled globally.

Within that vision, familiar themes such as AI factories, agentic AI, and Physical AI took center stage. Yet beneath these announcements lies a more subtle but equally significant shift in thinking – one that points toward the possibility of extending compute infrastructure beyond the physical limits of Earth.

While not presented as a formal product roadmap, the notion of orbital or space-based computing reflects a growing industry awareness: today’s terrestrial infrastructure may not be sufficient to sustain the next phase of AI growth.

The Limits of Earth-Bound Compute

AI workloads are undergoing a structural transformation. The industry is moving from a training-centric paradigm to one dominated by inference and, increasingly, reasoning – multi-step, context-aware processes that require continuous, scalable compute.

This shift places unprecedented pressure on existing infrastructure.

Three constraints are becoming increasingly visible. First is energy. Hyperscale AI systems are pushing power consumption toward levels that challenge even the most advanced electrical grids. Second is thermal management. As GPUs and accelerators grow more powerful, the heat they generate strains conventional cooling systems. Third is physical scalability. Expanding data centers on Earth requires land, regulatory approvals, and long development cycles, all of which limit how quickly capacity can grow.

In this context, the exploration of alternative compute paradigms – whether at the edge, in distributed environments, or potentially in orbit – begins to look less like speculation and more like long-term strategic planning.

Processing Data Where It Is Generated

Another factor driving this line of thinking is the changing geography of data.

A growing share of high-value data is now generated outside traditional data center environments – particularly through satellites, remote sensing systems, and global monitoring networks. In the current model, this data must be transmitted back to Earth before it can be processed, introducing both latency and bandwidth constraints.

Conceptually, processing data closer to its point of origin – whether at the edge or in orbit – offers a more efficient alternative. By filtering, analyzing, and compressing data before transmission, systems could reduce network load while enabling faster decision-making in time-sensitive scenarios such as climate monitoring or disaster response.
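To make the filter-then-compress idea concrete, here is a minimal sketch in Python. It is illustrative only: the `Tile` structure, the `cloud_fraction` score, and the 0.3 threshold are hypothetical and do not describe any real satellite payload or NVIDIA software. The point is simply that discarding low-value data and compressing the remainder before transmission shrinks what has to cross the downlink.

```python
import zlib
from dataclasses import dataclass


@dataclass
class Tile:
    """One captured image tile (hypothetical structure for illustration)."""
    tile_id: int
    cloud_fraction: float  # assumed quality score: 0.0 = clear, 1.0 = fully obscured
    pixels: bytes


def select_for_downlink(tiles, max_cloud_fraction=0.3):
    """Drop tiles that are too obscured to be useful, then compress the rest."""
    kept = [t for t in tiles if t.cloud_fraction <= max_cloud_fraction]
    return [(t.tile_id, zlib.compress(t.pixels)) for t in kept]


def downlink_bytes(payloads):
    """Total number of bytes that would actually be transmitted."""
    return sum(len(blob) for _, blob in payloads)


# Synthetic stand-in data; real imagery would compress far less predictably.
tiles = [
    Tile(0, 0.05, bytes(1000)),
    Tile(1, 0.90, bytes(1000)),           # mostly cloud, filtered out on board
    Tile(2, 0.20, bytes(range(250)) * 4),
]

raw_total = sum(len(t.pixels) for t in tiles)
payloads = select_for_downlink(tiles)
sent_total = downlink_bytes(payloads)
print(f"raw: {raw_total} bytes, sent: {sent_total} bytes")
```

In a real system the filtering step would more likely be an inference model than a fixed threshold, but the trade-off is the same: compute spent near the sensor in exchange for bandwidth saved on the link.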

This reflects a broader shift in computing philosophy: from moving data to compute, to moving compute closer to data.

Engineering Reality vs. Conceptual Vision

It is important to distinguish between conceptual direction and near-term feasibility.

Deploying data centers in space presents formidable engineering challenges. With no atmosphere to carry heat away by convection, cooling systems must reject heat by radiation alone; hardware must withstand radiation exposure; and maintenance is inherently more complex than in terrestrial environments.
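The cooling constraint can be given a rough sense of scale. In vacuum, heat leaves a spacecraft by thermal radiation, which the Stefan-Boltzmann law puts at emissivity × σ × area × (T⁴ − T_sink⁴). The sketch below is an idealized back-of-the-envelope estimate, not an engineering model: the 1 MW load, 300 K radiator temperature, and 0.9 emissivity are assumed example values, and it ignores heating from sunlight and from Earth.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w, radiator_temp_k, emissivity=0.9, sink_temp_k=0.0):
    """Single-sided radiator area needed to reject heat_w watts by radiation.

    Idealized: assumes an unobstructed view of a cold sink and no absorbed
    solar or planetary heat, so real radiators would need to be larger.
    """
    net_flux = emissivity * STEFAN_BOLTZMANN * (
        radiator_temp_k**4 - sink_temp_k**4
    )  # W per m^2 of radiating surface
    if net_flux <= 0:
        raise ValueError("radiator must be hotter than its surroundings")
    return heat_w / net_flux


# Assumed example: a 1 MW load with radiators held near room temperature.
area = radiator_area_m2(heat_w=1_000_000, radiator_temp_k=300.0)
print(f"{area:.0f} m^2")
```

Under these assumptions the answer comes out at roughly 2,400 square metres of radiating surface per megawatt. Because radiated power grows with the fourth power of temperature, running the radiators hotter shrinks that area quickly, which is one reason thermal design dominates this kind of discussion.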

However, these challenges are not entirely unprecedented. Decades of aerospace engineering, along with advances in radiation-hardened electronics and modular satellite design, provide a foundation for thinking about more resilient off-world systems. At the same time, the rapid progress of commercial space companies such as SpaceX and Rocket Lab is lowering the barrier to accessing and operating in low Earth orbit.

From this perspective, orbital computing should be viewed not as an imminent deployment, but as part of a longer-term exploration of how far distributed infrastructure can extend.

The Role of AI Factories and Agentic Systems

Any discussion of future infrastructure must be grounded in how AI systems themselves are evolving.

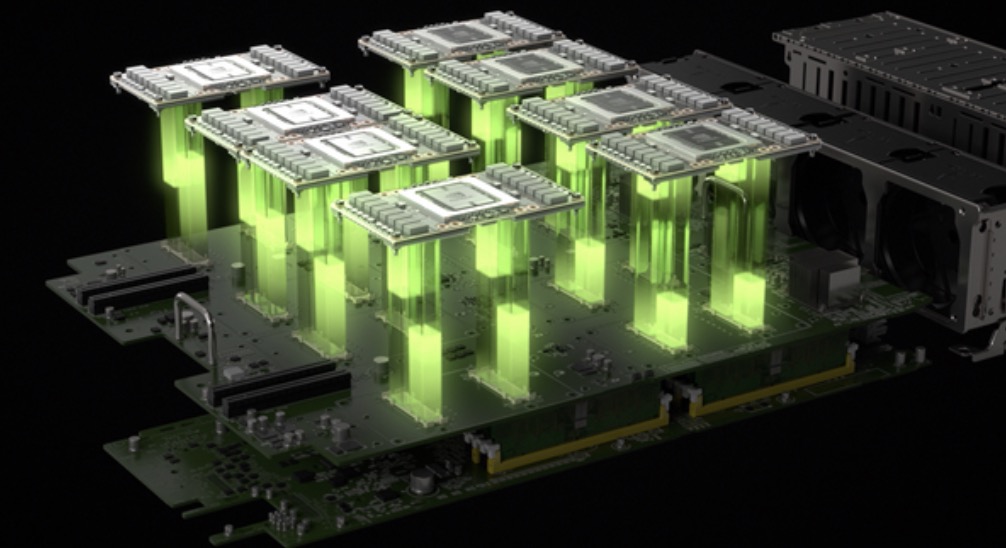

At GTC 2026, Huang described a world built on “AI factories” – systems designed to continuously ingest data, run inference at scale, and generate outputs measured in tokens. These environments represent a shift from traditional computing models to production systems for intelligence.

At the same time, the rise of agentic AI introduces a new layer of orchestration. Autonomous software agents are increasingly capable of managing workflows, coordinating resources, and making decisions across distributed systems.

In such a context, it is not difficult to imagine a multi-layered compute fabric, where workloads are dynamically allocated across cloud, edge, and potentially orbital nodes. In this model, space-based compute – if realized – would not exist in isolation, but as part of a broader, interconnected infrastructure.
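One way to picture such a fabric is as a placement decision made per workload. The following is a deliberately simple sketch under stated assumptions: the tier names, latency figures, and capacity units are invented placeholders, and the policy (choose the tier with the most free capacity among those that meet the latency bound) is just one plausible rule, not something described at GTC.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Tier:
    name: str
    round_trip_ms: float   # assumed typical round-trip latency to this tier
    free_capacity: float   # arbitrary compute units currently available


@dataclass(frozen=True)
class Workload:
    name: str
    max_round_trip_ms: float  # latency the workload can tolerate
    demand: float             # compute units required


def place(workload: Workload, tiers: Sequence[Tier]) -> Optional[Tier]:
    """Return the eligible tier with the most headroom, or None if none fits."""
    eligible = [
        t for t in tiers
        if t.round_trip_ms <= workload.max_round_trip_ms
        and t.free_capacity >= workload.demand
    ]
    return max(eligible, key=lambda t: t.free_capacity, default=None)


# Placeholder numbers chosen only to exercise the policy.
tiers = [
    Tier("edge", round_trip_ms=5, free_capacity=10),
    Tier("cloud", round_trip_ms=40, free_capacity=1000),
    Tier("orbital", round_trip_ms=120, free_capacity=200),
]

control = place(Workload("robot-control", max_round_trip_ms=10, demand=2), tiers)
batch = place(Workload("batch-analysis", max_round_trip_ms=500, demand=150), tiers)
print(control.name, batch.name)
```

A realistic scheduler would also weigh energy availability, data locality, and link bandwidth, but even this toy version shows that an orbital tier would be one option among several rather than a separate system.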

Strategic and Economic Implications

Expanding compute infrastructure beyond Earth raises important strategic questions.

For enterprises, it introduces new considerations around workload placement, latency optimization, and resilience. For governments, it touches on digital sovereignty and technological competitiveness. And for the broader industry, it suggests that the total addressable market for AI infrastructure may extend beyond traditional cloud and data center models.

NVIDIA has already framed AI as a trillion-dollar opportunity. If infrastructure evolves toward a more distributed, multi-layered system – including edge and potentially orbital components – that opportunity could expand even further.

A Distributed Continuum of Intelligence

Ultimately, the idea of space-based computing is less about a specific product and more about a shift in perspective.

AI infrastructure is no longer confined to centralized data centers. It is evolving into a distributed continuum that spans cloud, edge, physical systems, and potentially orbital environments. Future system architects may think not only in terms of compute power, but also in terms of latency layers, energy availability, and data locality across different domains – including space.

In this sense, NVIDIA’s messaging at GTC 2026 points toward a broader conclusion: the future of AI will be defined as much by where computation happens as by how fast it runs.

Whether orbital compute becomes a practical reality in the near term remains uncertain. But as a direction of thought, it reflects the scale of ambition required to support AI not merely as a technology, but as a foundational layer of the global digital economy.