At its GTC 2026 conference in San Jose, NVIDIA introduced a new generation of chips and data center platforms, signaling a broader push into areas traditionally dominated by Intel and AMD. The announcements span from a dedicated AI inference processor—Groq 3—to large-scale server systems built around its latest CPU and GPU technologies.

Among the highlights is the debut of the Groq 3 language processing unit (LPU), a chip specifically designed for AI inference workloads. This marks a strategic expansion for NVIDIA, which has historically relied on its versatile GPUs to handle both AI training and inference tasks. As the AI industry increasingly shifts toward deploying and running models at scale, demand for specialized inference hardware has grown rapidly.

The Groq 3 chip stems from NVIDIA’s acquisition of Groq’s technology and talent in a deal valued at $20 billion late last year. As part of that move, Groq’s leadership—including founder Jonathan Ross and president Sunny Madra—joined NVIDIA, bringing expertise in high-performance inference computing.

Inference processing is the stage where trained AI models generate responses to user inputs, such as queries submitted to systems like ChatGPT, Claude, or Gemini. While NVIDIA GPUs remain powerful and flexible, the company sees value in complementing them with purpose-built chips optimized for this specific task.

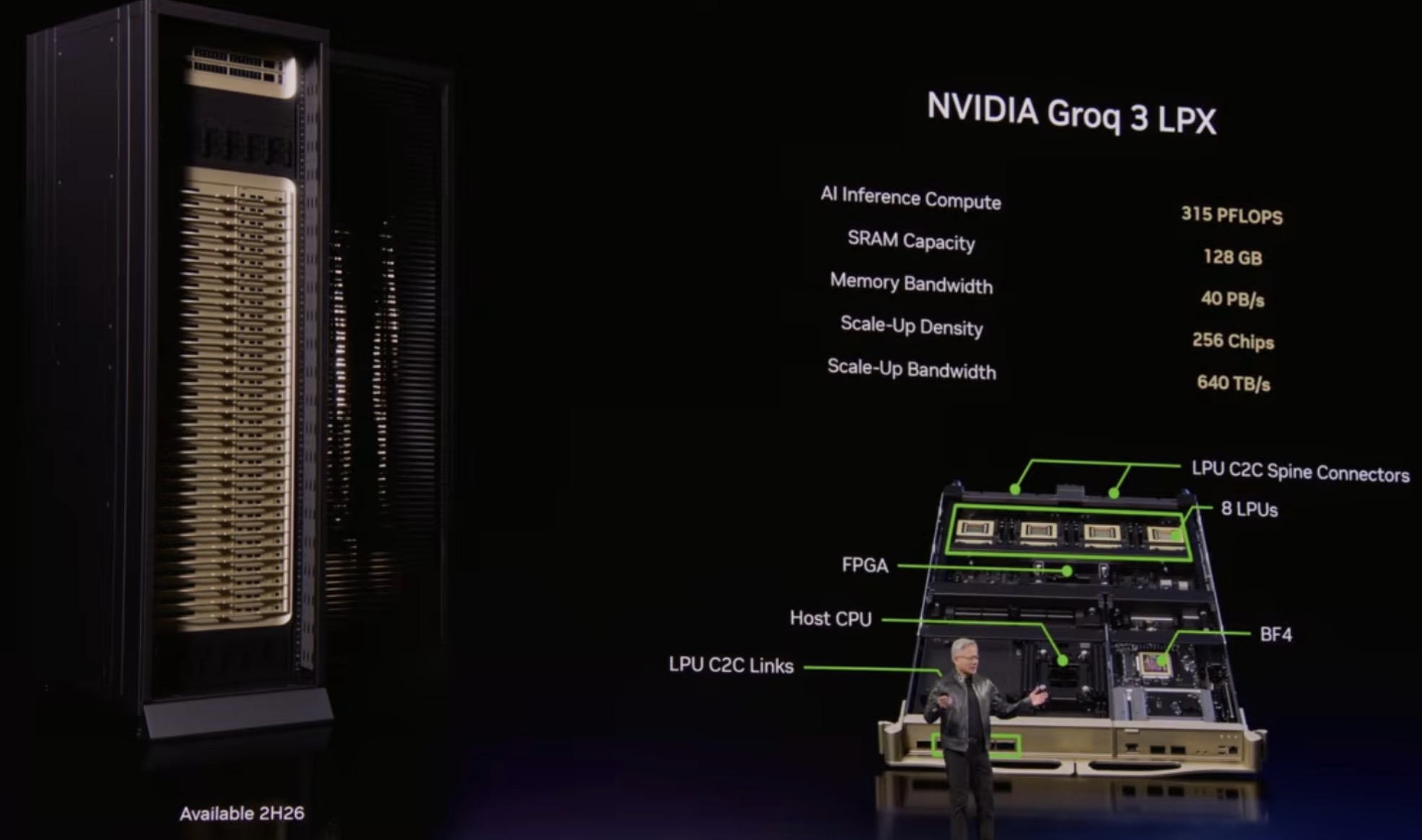

To leverage this, NVIDIA also introduced the Groq 3 LPX platform—a server rack system equipped with 128 Groq 3 LPUs. The company positions this platform as a high-efficiency solution for large-scale AI deployments, particularly when paired with its Vera Rubin NVL72 infrastructure.

According to NVIDIA, combining LPX with Vera Rubin systems can significantly boost performance efficiency, delivering dramatically higher throughput per unit of power and opening up new revenue opportunities for AI service providers. The architecture is designed to handle extremely large AI models, including those with trillions of parameters and extended context windows.

A key advantage of the Groq 3 design lies in its memory speed. While NVIDIA’s GPUs offer larger memory capacity, the LPU architecture focuses on faster memory access, enabling quicker inference operations. By integrating both technologies, NVIDIA aims to strike a balance between capacity and speed.

In parallel, NVIDIA introduced a standalone version of its Vera CPU. Previously part of the Vera Rubin superchip—combining one CPU with two GPUs—the Vera processor is now being deployed independently in dedicated server racks. These systems integrate up to 256 liquid-cooled Vera CPUs into a single platform, targeting high-density, high-performance data center workloads.

Altogether, NVIDIA outlined five new server rack configurations at the event, each tailored to different roles within AI data centers. The company’s broader strategy reflects an effort to maintain its leadership in the AI hardware market, while addressing rising competition from emerging players focused on inference acceleration.

With Groq 3 and its expanded server ecosystem, NVIDIA is positioning itself not just as a GPU leader, but as a comprehensive infrastructure provider for the next phase of AI computing.