From AI factories and agentic software to robotics and space-based data centers, the keynote by Jensen Huang at NVIDIA GTC outlines a comprehensive blueprint for the infrastructure and ecosystem powering the next decade of artificial intelligence.

At first glance, the keynote delivered by Jensen Huang at NVIDIA GTC 2026 looked like a familiar spectacle: a charismatic CEO on stage, a cascade of new chips, software platforms and robotics demos, and a crowd of developers eager to see what comes next.

But beneath the theatrics, Huang’s presentation revealed something more profound: a coherent blueprint for the next decade of artificial intelligence.

The keynote was not simply about GPUs or even AI models. Instead, it outlined a systemic transformation of computing itself — one that spans infrastructure, software architecture, economic models and even physical robotics. If interpreted carefully, the themes Huang emphasized point toward five structural shifts that could define how AI evolves over the next ten years.

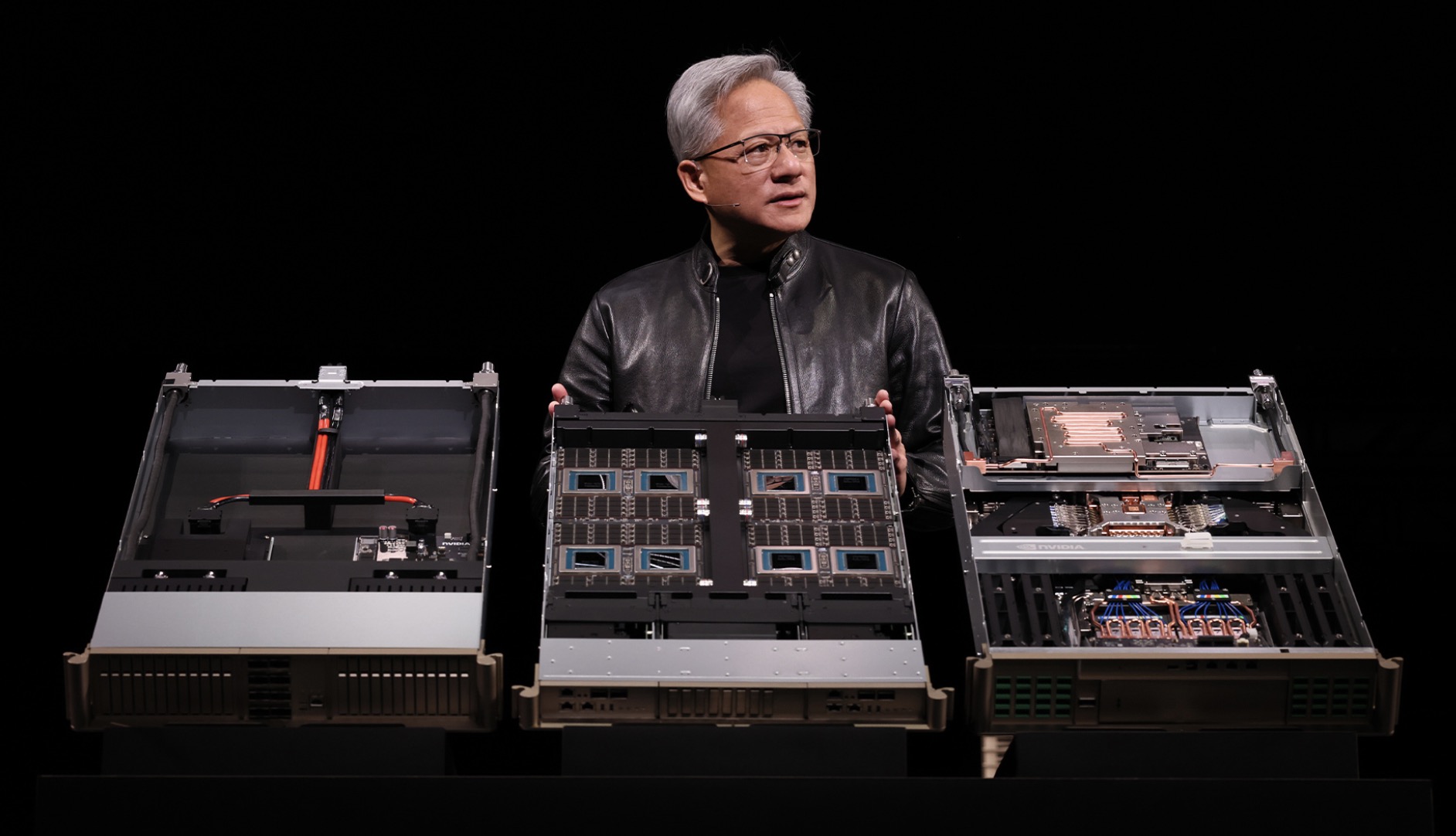

The Transformation of Data Centers Into AI Factories

One of the most important ideas Huang introduced is that the traditional concept of a data center is already obsolete.

Historically, data centers existed primarily to store and process enterprise data. But Huang reframed them as something fundamentally different: industrial-scale facilities designed to generate AI output. Increasingly, their core product is not storage or compute cycles but AI tokens, the units of text, code or other data that a model produces during inference.

In other words, the modern data center is evolving into what Huang calls an AI factory.

These factories ingest massive datasets and produce reasoning, predictions, images, software code and automated decisions. Their economic model resembles manufacturing more than traditional computing infrastructure.

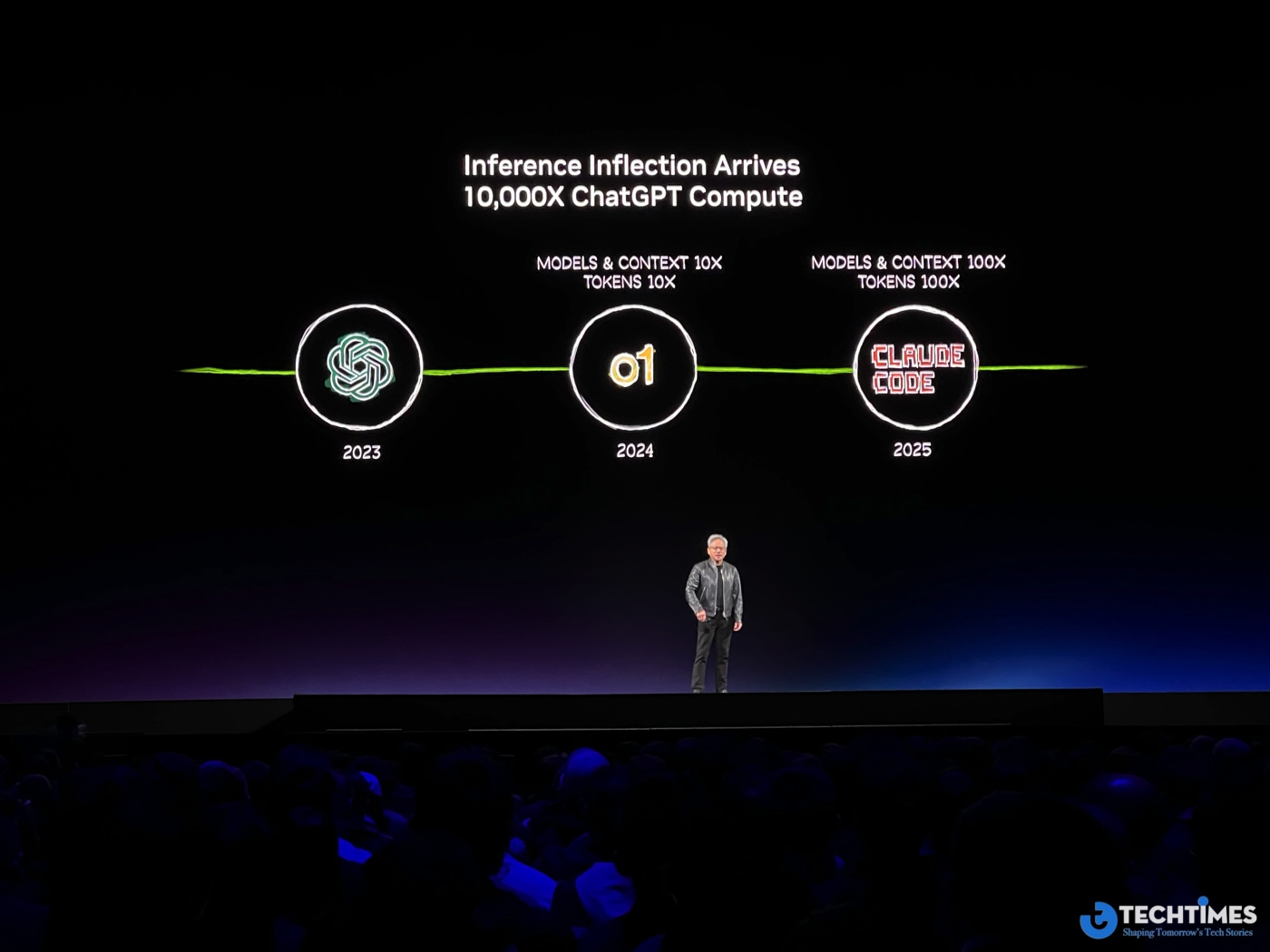

This shift also explains why the scale of AI infrastructure is expanding at an unprecedented rate. NVIDIA estimates that demand for AI compute could surpass $1 trillion in the coming years, driven primarily by inference workloads rather than model training.

Inference — the act of running AI models to produce answers — was referenced repeatedly throughout Huang’s keynote, signaling that the next phase of AI competition will revolve around how efficiently companies can generate tokens at massive scale.
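To make the "efficiency of token generation" idea concrete, here is a minimal sketch of the arithmetic behind a cost-per-token figure. Every number and field name below is a made-up placeholder for illustration; none of them comes from the keynote or from NVIDIA.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class FactoryAssumptions:
    """Illustrative inputs only; these are assumptions, not keynote figures."""
    tokens_per_second: float     # aggregate inference throughput
    power_kw: float              # average electrical draw in kilowatts
    energy_cost_per_kwh: float   # USD per kilowatt-hour
    hourly_capital_cost: float   # amortized hardware and facility cost, USD/hour


def cost_per_million_tokens(a: FactoryAssumptions) -> float:
    """Return the USD cost of producing one million tokens."""
    tokens_per_hour = a.tokens_per_second * 3600
    hourly_cost = a.power_kw * a.energy_cost_per_kwh + a.hourly_capital_cost
    return hourly_cost / tokens_per_hour * 1_000_000


# Hypothetical baseline: 50k tokens/s, 100 kW, $0.10/kWh, $80/hour of capital.
baseline = FactoryAssumptions(
    tokens_per_second=50_000,
    power_kw=100,
    energy_cost_per_kwh=0.10,
    hourly_capital_cost=80.0,
)

# Same facility, but with twice the throughput from the same hardware and power.
faster = replace(baseline, tokens_per_second=100_000)

baseline_cost = cost_per_million_tokens(baseline)
faster_cost = cost_per_million_tokens(faster)
```

Under these assumed inputs, doubling throughput at fixed cost halves the cost per token, which is why the manufacturing analogy puts so much weight on output per unit of power and capital.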

In that sense, AI factories are not just a new data center architecture. They are the industrial backbone of the AI economy.

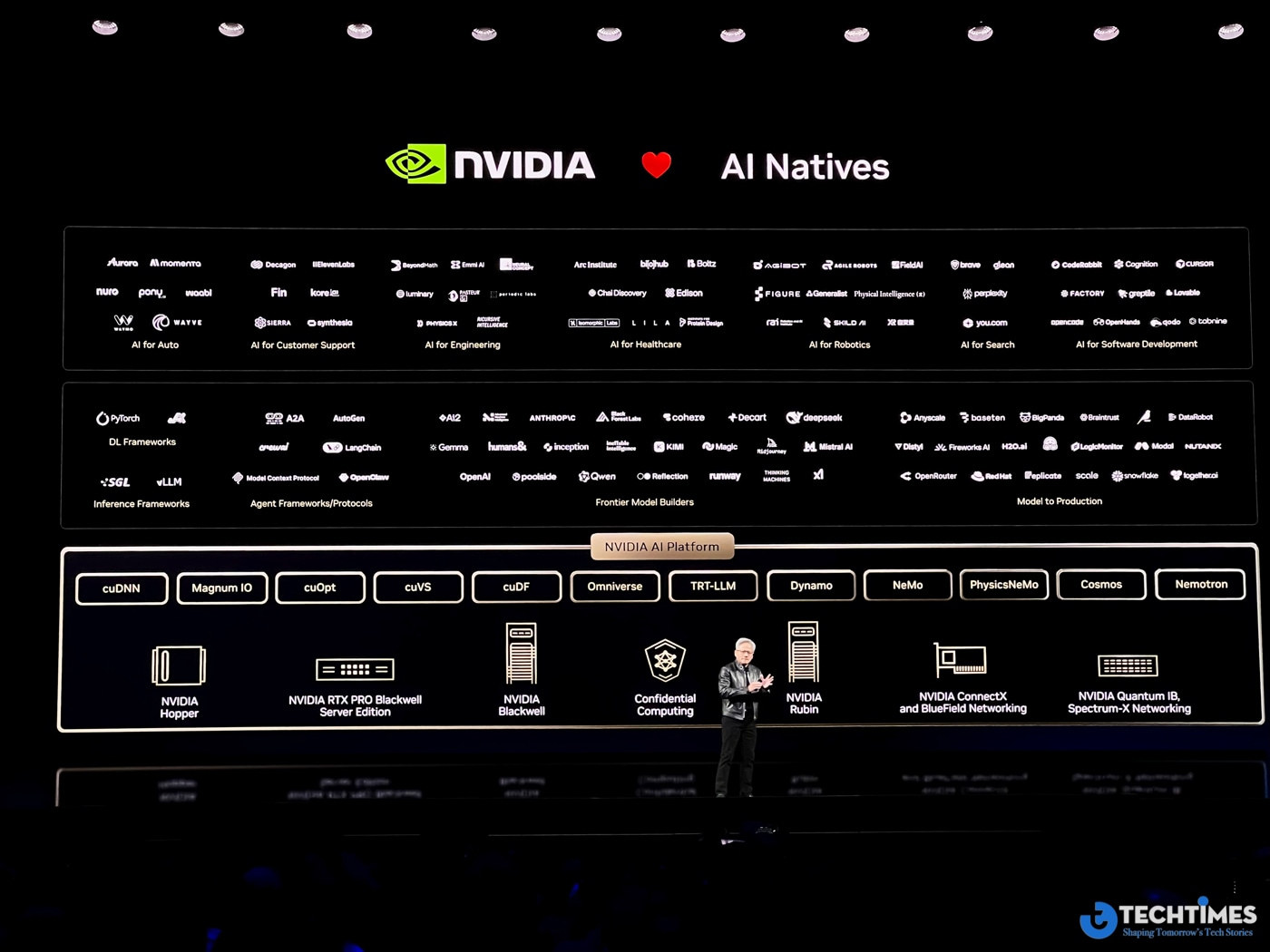

The Rise of Agentic Software

If AI factories represent the infrastructure layer, the next transformation occurs at the software layer.

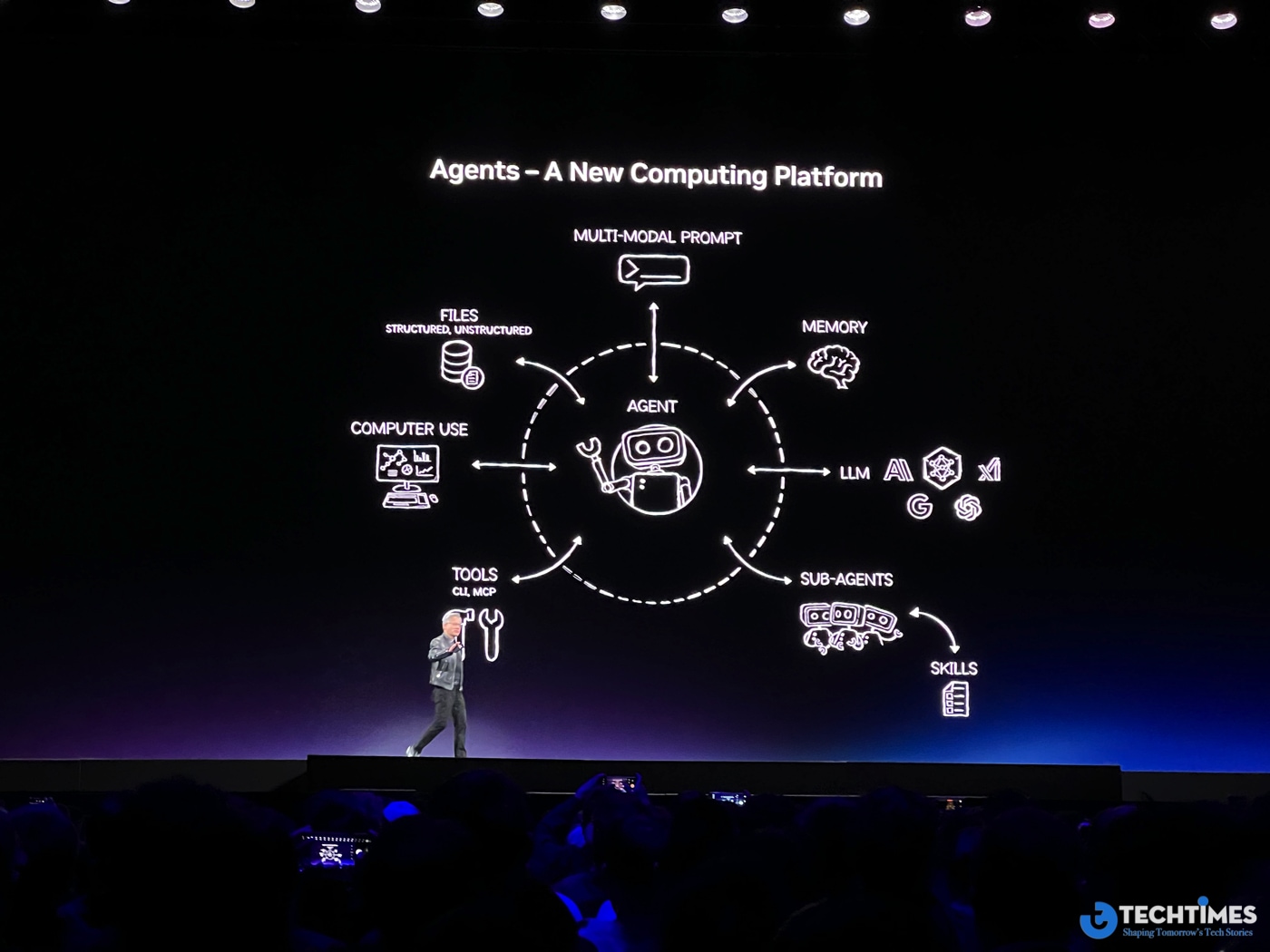

Huang repeatedly highlighted the emergence of agentic AI — autonomous systems capable of planning, reasoning and executing tasks across multiple tools and environments.

A key example discussed during GTC was the explosive growth of OpenClaw, an open-source framework for building AI agents. The platform allows developers to create agents that can decompose complex problems, schedule tasks, interact with software systems and collaborate with other agents.
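The decompose-then-execute pattern described above can be sketched in a few lines. This is a generic illustration, not OpenClaw's API: the `plan` function, the tool names and the `{previous}` placeholder are all hypothetical, and a real agent would ask a model to produce the plan rather than hard-coding it.

```python
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]


def search_tool(arg: str) -> str:
    # Hypothetical tool: stands in for a call to a real search system.
    return f"results for '{arg}'"


def summarize_tool(arg: str) -> str:
    # Hypothetical tool: stands in for a call to a summarization model.
    return f"summary of {arg}"


TOOLS: Dict[str, Tool] = {"search": search_tool, "summarize": summarize_tool}


def plan(goal: str) -> List[Tuple[str, str]]:
    """Stand-in planner that decomposes a goal into (tool, argument) steps."""
    return [("search", goal), ("summarize", "{previous}")]


def run_agent(goal: str) -> List[str]:
    """Execute each planned step in order, feeding each output to the next."""
    results: List[str] = []
    previous = ""
    for tool_name, arg in plan(goal):
        tool = TOOLS[tool_name]
        output = tool(arg.replace("{previous}", previous))
        results.append(output)
        previous = output
    return results


outputs = run_agent("GPU pricing")
```

The point of the sketch is the shape of the loop: a planner emits steps, a dispatcher maps each step to a tool, and intermediate results flow forward. Scheduling and multi-agent collaboration would layer on top of this.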

NVIDIA’s own enterprise adaptation of the framework, called NemoClaw, adds security layers such as network guardrails and privacy routing to make agentic systems viable inside corporate environments.

Huang compared the significance of agent frameworks to foundational technologies like Linux and HTML. The implication is clear: software may soon shift from traditional applications toward ecosystems of autonomous agents.

In this model, a company’s digital workforce could include thousands — or even millions — of AI agents operating alongside human employees.

If that vision materializes, enterprise software itself may be redefined.

The Physical AI Revolution

For the past decade, most AI development has focused on digital domains: language models, search engines and recommendation systems. But Huang made it clear that the next frontier is the physical world.

He described the current moment as the dawn of Physical AI — intelligent systems that interact directly with real-world environments through robotics, autonomous machines and industrial automation.

Training such systems presents a major challenge. Real-world data alone is insufficient because physical environments are unpredictable and full of rare edge cases. NVIDIA’s solution is to combine real data with massive amounts of synthetic data generated in simulation.

Through platforms like Omniverse and Isaac Lab, developers can train robots in large-scale simulated worlds before deploying them in reality. These simulation environments generate the diversity of scenarios required for robust machine learning.
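One common way simulators produce that diversity is domain randomization: sampling physical and sensor parameters at random for each training episode. The sketch below illustrates the idea with the Python standard library only. It does not use the Omniverse or Isaac Lab APIs, and the parameter names and ranges are assumptions chosen for illustration.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Scenario:
    """One randomized training environment (all fields are hypothetical)."""
    friction: float          # floor friction coefficient
    lighting_lux: float      # scene illumination
    payload_kg: float        # mass the robot must carry
    sensor_noise_std: float  # standard deviation of simulated sensor noise


def sample_scenario(rng: random.Random) -> Scenario:
    """Draw each parameter uniformly from an assumed plausible range."""
    return Scenario(
        friction=rng.uniform(0.2, 1.0),
        lighting_lux=rng.uniform(50.0, 2000.0),
        payload_kg=rng.uniform(0.0, 5.0),
        sensor_noise_std=rng.uniform(0.0, 0.05),
    )


def generate_scenarios(n: int, seed: int = 0) -> List[Scenario]:
    """Generate n scenarios reproducibly from a fixed seed."""
    rng = random.Random(seed)
    return [sample_scenario(rng) for _ in range(n)]


scenarios = generate_scenarios(1000)
```

Each scenario would then configure one simulated episode. Seeding the generator keeps a training run reproducible while still covering a wide spread of conditions.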

The approach effectively turns simulation into a data factory for robotics.

This strategy is already being adopted by robotics companies building humanoid robots, autonomous vehicles and industrial automation systems. If successful, it could accelerate the deployment of intelligent machines across manufacturing, logistics and healthcare.

Expanding the Boundaries of Compute

Another theme of Huang’s keynote pushed the limits of where computing can physically exist.

Among the most surprising concepts discussed was the possibility of space-based data centers — AI infrastructure operating in orbit rather than on Earth.

While the idea may sound futuristic, the rationale is surprisingly practical. Earth-based data centers are increasingly constrained by land availability, power consumption and cooling requirements. In orbit, solar energy is abundant and land is not a constraint.

However, space computing introduces entirely new engineering challenges. Terrestrial cooling ultimately rejects heat into surrounding air or water through convection, and a vacuum offers no such medium. Conduction can still move heat through the hardware itself, but, as Huang noted, cooling in orbit would have to rely primarily on radiation to reject that heat, forcing engineers to rethink data center design from first principles.
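A rough sense of what radiation-only cooling implies comes from the Stefan-Boltzmann law, P = εσA(T⁴ − T_sink⁴). The sketch below solves it for radiator area. It assumes an idealized single-sided radiator facing deep space and ignores absorbed sunlight and Earth's infrared. The 1 MW load, 300 K radiator temperature and 0.9 emissivity are illustrative assumptions, not figures from the keynote.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4), CODATA value


def radiator_area_m2(
    heat_load_w: float,
    radiator_temp_k: float,
    emissivity: float = 0.9,
    sink_temp_k: float = 0.0,
) -> float:
    """Area needed to radiate heat_load_w, from P = e * sigma * A * (T^4 - Ts^4)."""
    if not 0.0 < emissivity <= 1.0:
        raise ValueError("emissivity must be in (0, 1]")
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than its surroundings")
    net_flux = emissivity * STEFAN_BOLTZMANN * (
        radiator_temp_k ** 4 - sink_temp_k ** 4
    )
    return heat_load_w / net_flux


# Assumed example: 1 MW of waste heat, radiator surface held at 300 K.
area_300k = radiator_area_m2(1_000_000, 300.0)

# Same load with a hotter radiator at 350 K.
area_350k = radiator_area_m2(1_000_000, 350.0)
```

Under these assumptions a single megawatt needs on the order of a couple of thousand square meters of radiator at 300 K. Because radiated power scales with the fourth power of temperature, running the radiator hotter shrinks the required area sharply, which is one of the trade-offs that makes orbital thermal design so different from a terrestrial facility.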

Whether or not orbital data centers become mainstream, the concept reflects a broader reality: the AI infrastructure boom is pushing the boundaries of what constitutes a computing platform.

Compute as the New Strategic Resource

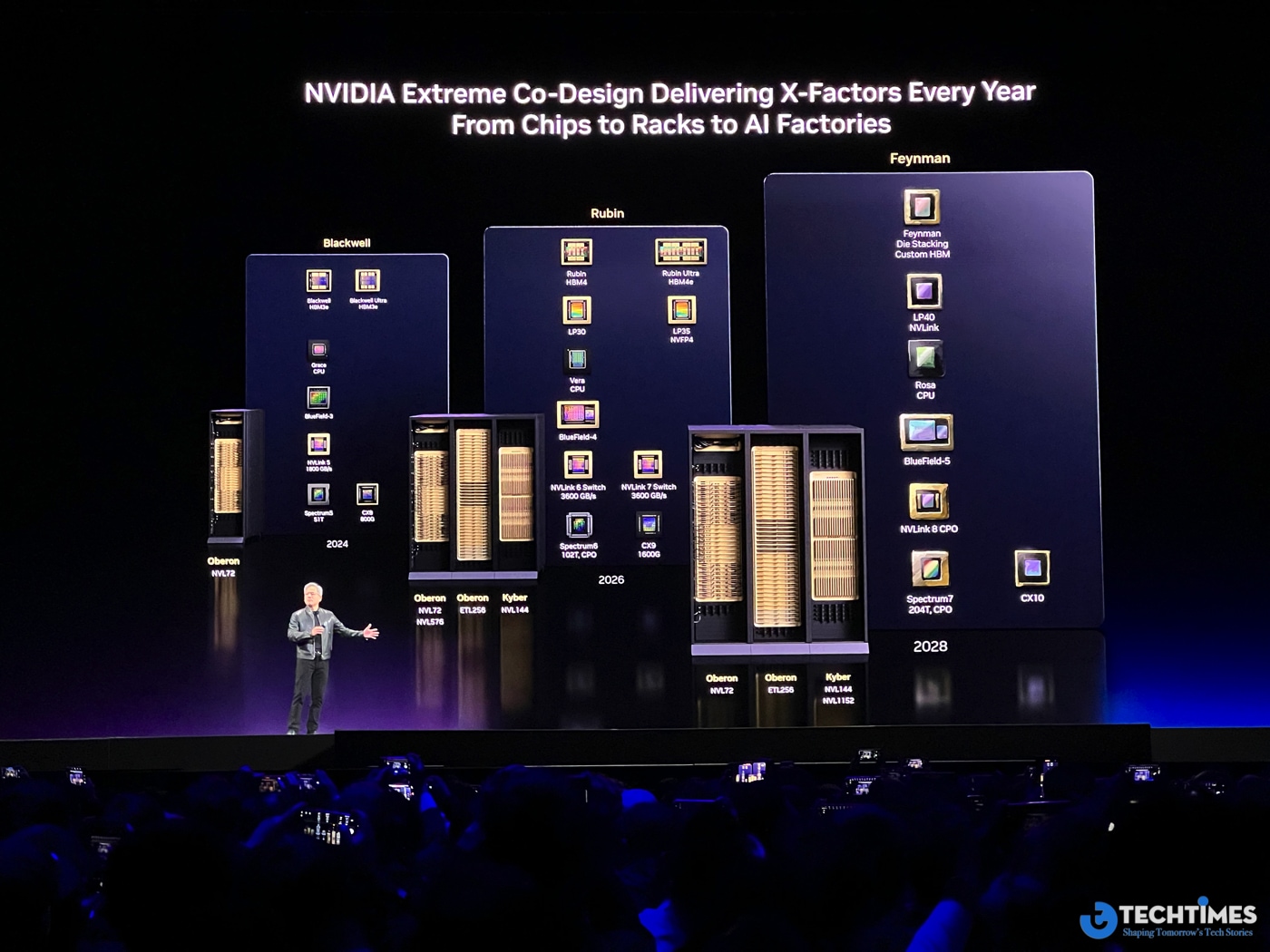

Underlying every theme in Huang’s keynote is a simple but powerful idea: compute is becoming the fundamental resource of the AI age.

Just as electricity defined the industrial era and data defined the internet era, compute capacity is now the limiting factor for innovation in artificial intelligence.

Demand for computing power has already increased dramatically in recent years, and the growth trajectory suggests that AI infrastructure will continue expanding at a historic pace.

This explains why NVIDIA’s strategy is no longer focused solely on GPUs. The company is building an entire ecosystem — from chips and networking to simulation platforms, robotics frameworks and AI software stacks.

The goal is not merely to sell hardware. It is to power the operating system of the AI economy.

The Road to the Next AI Era

Taken individually, the announcements at GTC 2026 may appear to be incremental improvements in hardware, software and research.

Taken together, they form something more significant: a long-term architecture for the AI-driven world.

In this architecture, AI factories generate the tokens that power digital intelligence. Agentic software transforms applications into autonomous systems. Physical AI brings intelligence into machines and robotics. Simulation platforms produce the data required to train them. And compute infrastructure expands across cloud, edge and possibly even space.

What Jensen Huang presented on stage was not just a keynote. It was a roadmap for how computing itself may evolve over the next decade.